Download The Determinism Spectrum Framework: Classify your AI system in under 10 minutes and match your testing strategy to it.

AI development is advancing at full speed, but testing approaches for these systems remain largely traditional.

In deterministic systems, testing yields black-and-white results. AI systems, however, are often probabilistic, so traditional pass/fail testing alone cannot give a full picture of their outcomes.

AI testing mistakes are more common than you might realize. Here’s a scenario playing out in enterprises right now:

A marketing team deploys generative AI to create email campaigns. The system works beautifully: creative, on-brand, engaging. Then QE runs its standard test: 100 identical prompts. The outputs vary. QE flags “inconsistency” as a critical defect.

The AI project stalls.

The QE team wasn’t wrong to care about consistency. They were applying a consistency test to the wrong kind of AI system.

According to recent NTT Data research (2025), 75%-80% of AI deployments fail to reach their expected outcomes. Teams either over-test creative systems (flagging natural variance as defects) or under-test deterministic systems (accepting unacceptable variance as “just how AI works”).

The core issue is that not all AI systems should behave the same way, and they can’t be tested the same way either.

Talk to our AI Quality experts

Not sure where to begin? Book a 30-minute AI Quality Engineering consultation to review your AI system architecture and use cases with our AI Quality experts and identify your highest-risk quality gaps.

Let’s break down the three most common mistakes.

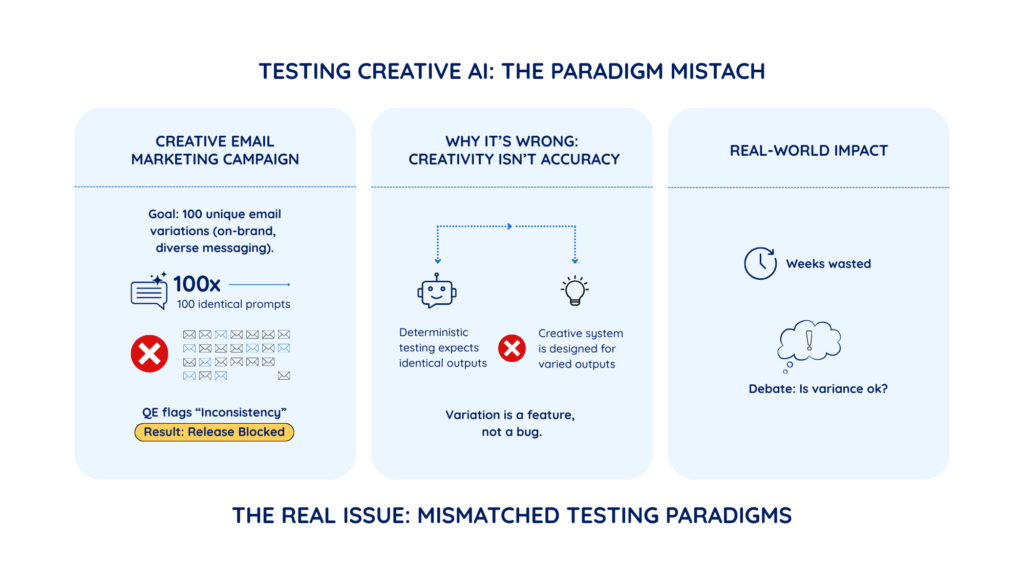

Your marketing team deploys AI to generate variations for email campaigns. The goal: create 100 different emails that are all on-brand but varied enough to test which messaging resonates.

Your QE team runs 100 identical prompts. They get 100 different outputs. They flag “inconsistency” as a critical defect and block the release.

The system was designed to be creative. Variation was the goal, not a bug.

This is like testing a human copywriter by asking them to write the same email 100 times and calling it a failure when each version is different. The “defect” QE flagged is actually the feature the marketing team needs.

The result? Projects stall while teams debate whether variance is acceptable, wasting weeks arguing over a testing mismatch.

You’re applying deterministic testing (expecting identical outputs) to a creative system (designed for varied outputs).
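As a rough illustration of testing a creative system on properties rather than on identical outputs, the sketch below checks that every generated email satisfies a brand rule and that the batch is actually varied. The function names, the 0.9 distinctness threshold, and the "Acme" brand rule are hypothetical placeholders, not part of any framework described here.

```python
from typing import Callable, Iterable


def check_creative_outputs(outputs: Iterable[str],
                           is_on_brand: Callable[[str], bool],
                           min_distinct_ratio: float = 0.9) -> dict:
    """Pass when every output meets the brand rule and the batch is varied."""
    outputs = list(outputs)
    off_brand = [o for o in outputs if not is_on_brand(o)]
    # Share of outputs that are unique; identical outputs lower this ratio.
    distinct_ratio = len(set(outputs)) / len(outputs) if outputs else 0.0
    return {
        "off_brand_count": len(off_brand),
        "distinct_ratio": distinct_ratio,
        "passed": not off_brand and distinct_ratio >= min_distinct_ratio,
    }


# Hypothetical brand rule: every email must mention the product name.
def mentions_product(text: str) -> bool:
    return "acme" in text.lower()


# Stand-in for 100 generated emails (assumed data, for illustration only).
emails = [f"Acme offer #{i}: save today" for i in range(100)]
report = check_creative_outputs(emails, mentions_product)
```

Here variation counts toward passing instead of against it; a real brand check would likely need human review or a scoring model rather than a keyword match.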

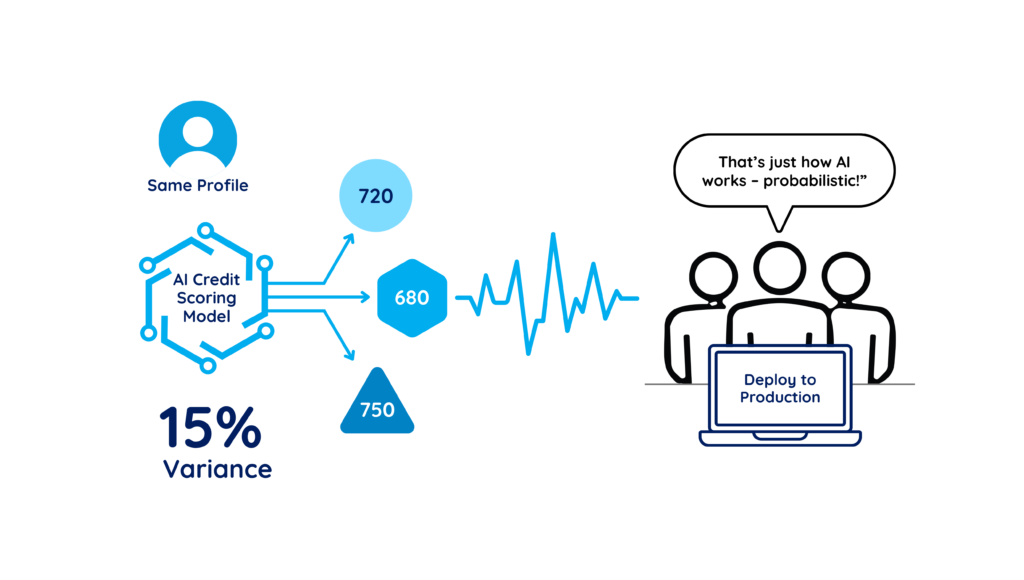

Your credit-scoring AI shows a 15% variance when testing the same customer profile multiple times. Some runs return 720, others 680, others 750.

When flagged, someone says: “That’s just how AI works; it’s probabilistic.” The team accepts the variance and moves to production.

Credit scoring must be exact. The same customer profile should always produce the same score. If it doesn’t, this isn’t “AI being probabilistic.” It is a configuration error, model drift, or an architectural flaw.

You’re treating a deterministic system (must be 100% reproducible) like it’s a probabilistic system (some variance is acceptable).
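A reproducibility check for a system that must be deterministic can be sketched in a few lines: score the same profile repeatedly and fail if more than one distinct result appears. The scorer below is a hypothetical stand-in (its formula and the `years_of_history` field are invented for illustration), and the run count is an arbitrary assumption.

```python
from typing import Any, Callable


def check_reproducibility(score_fn: Callable[[dict], Any],
                          profile: dict,
                          runs: int = 20) -> dict:
    """Call score_fn repeatedly on one input and report any disagreement."""
    results = [score_fn(profile) for _ in range(runs)]
    distinct = sorted(set(results))
    return {"distinct_scores": distinct, "reproducible": len(distinct) == 1}


# Hypothetical stand-in for a rule-based scorer; not a real scoring model.
def stable_scorer(profile: dict) -> int:
    return 600 + min(profile["years_of_history"], 10) * 12


report = check_reproducibility(stable_scorer, {"years_of_history": 10})
```

For a system like this, any result with more than one distinct score would be treated as a defect to investigate, not as expected variance.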

Also Read: The 5 Dimensions of AI Quality: A Guide to Scaling AI from Pilot to Production

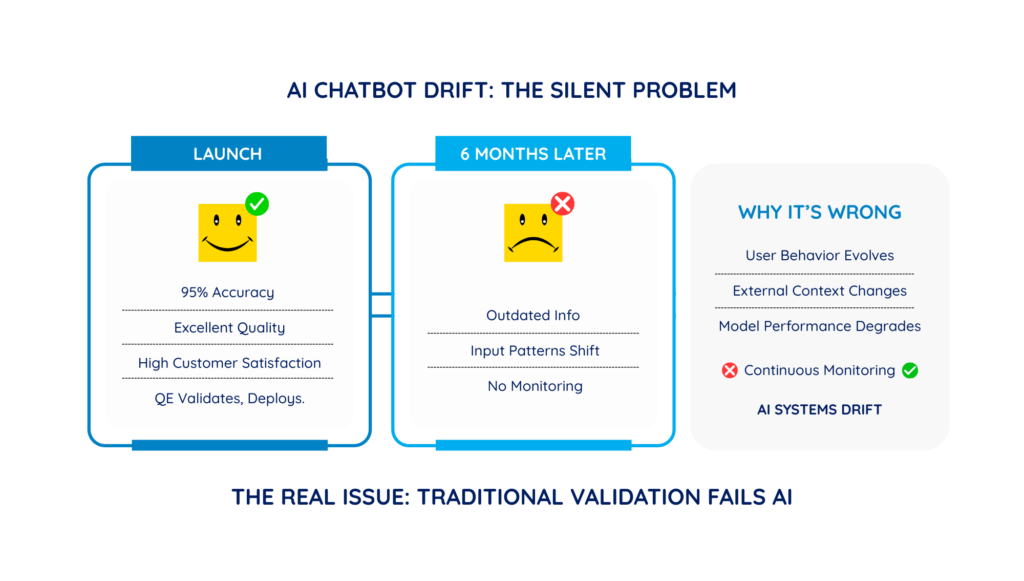

Your AI chatbot works beautifully at launch. QE thoroughly validates it: 95% accuracy, excellent quality, and high customer satisfaction.

You deploy. You move on to the next project.

Six months later, customer complaints spike. The chatbot has become verbose, sometimes gives outdated information, and occasionally misunderstands simple questions. No one was monitoring it.

AI systems drift over time; they change even when you don’t touch them.

One-time validation catches launch issues. It won’t catch drift six months later.

You’re applying a one-time validation (a traditional software approach) to systems that require continuous monitoring (AI systems that drift).
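One simple way to picture continuous monitoring is to compare the accuracy of a recent, human-graded sample against the accuracy measured at launch. The sketch below assumes a launch baseline of 0.95 (the figure from the scenario above) and a hypothetical tolerance of 0.05; both the function names and the threshold are illustrative assumptions.

```python
from typing import Sequence


def accuracy(graded_sample: Sequence[bool]) -> float:
    """Share of sampled responses that a reviewer marked as correct."""
    return sum(graded_sample) / len(graded_sample)


def has_drifted(baseline_accuracy: float,
                graded_sample: Sequence[bool],
                tolerance: float = 0.05) -> bool:
    """True when current accuracy is more than `tolerance` below baseline."""
    return baseline_accuracy - accuracy(graded_sample) > tolerance


# Assumed data: 100 sampled chatbot answers, 85 graded as correct.
recent_sample = [True] * 85 + [False] * 15
drifted = has_drifted(0.95, recent_sample)
```

Run on a schedule, a check like this surfaces degradation from the monitoring data rather than from customer complaints.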

These three mistakes share a common problem: teams apply traditional software testing principles to all AI systems without adapting for how AI actually behaves.

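Traditional software testing assumes that identical inputs produce identical outputs and that a system validated once stays valid until someone changes it.

But AI systems don’t all work this way. Some AI must be deterministic (e.g., credit scoring), while others are designed to vary (e.g., creative content). All of them drift over time.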

The solution isn’t more rigorous testing. It’s more precise testing based on what your AI system is designed to do.

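At Zuci, we’ve developed two frameworks that solve these testing mismatches: the Determinism Spectrum, which classifies an AI system by how predictable its outputs should be, and the Five Dimensions of AI Quality, which defines what to test.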

Together, these frameworks give you a clear, defensible testing strategy that satisfies QE teams, regulators, and business stakeholders.

The Determinism Spectrum classifies AI systems into four zones based on how predictable their outputs should be:

Zone 1: AI systems in this zone must produce identical outputs for identical inputs. For example, credit scoring, tax calculation, and rule-based fraud detection systems.

Zone 2: These systems reason based on context. For example, ML-based fraud-detection and risk-assessment systems.

Zone 3: These systems generate recommendations that humans act on. For example, content generation, chatbots, and AI summarization systems.

Zone 4: These systems generate creative, open-ended outputs. For example, marketing ideation, design generation, and brainstorming tools.

This framework evaluates your AI systems for what they are designed to do.

Your marketing email system is Zone 3 or 4. Stop testing for reproducibility. Instead, test for brand alignment, bias, and consistency in quality.

Your credit scoring system is Zone 1. Demand 100% reproducibility. Any variance is a defect that must be investigated immediately.

Your chatbot is in Zone 3. It will drift. Set up monthly monitoring with human evaluation sampling to catch degradation before users do.

19 questions. 10 minutes. One personalized report.

Benchmark your AI across 7 quality dimensions and discover what’s blocking production confidence.

📖 Read the complete framework: The Determinism Spectrum: A Framework for Classifying AI Systems

Once you know your system’s zone, you need to know what to test. The Five Dimensions framework defines quality across Reproducibility, Factuality, Bias, Explainability, and Drift.

This framework helps you understand which parameters to test in your AI system.

Stop testing Zone 3/4 systems for Reproducibility. Focus on Bias and Explainability instead.

Zone 1 systems must score perfectly on Reproducibility and Factuality. No exceptions.

All zones need continuous Drift monitoring. Your monitoring frequency depends on your zone: weekly for Zone 4, monthly for Zone 3, and quarterly for Zones 1 and 2.
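The monitoring cadence above can be written down as a small lookup so that overdue reviews are easy to spot. This is only a sketch: the day counts (7, 30, 90) are approximations of "weekly", "monthly", and "quarterly", and the function names are hypothetical.

```python
from datetime import date, timedelta

# Review intervals per zone, approximating the cadence stated in the text.
REVIEW_INTERVAL = {
    1: timedelta(days=90),  # quarterly
    2: timedelta(days=90),  # quarterly
    3: timedelta(days=30),  # monthly
    4: timedelta(days=7),   # weekly
}


def next_review(zone: int, last_review: date) -> date:
    """Date on which the next drift review falls due for a zone."""
    return last_review + REVIEW_INTERVAL[zone]


def is_review_due(zone: int, last_review: date, today: date) -> bool:
    """True when the zone's review interval has elapsed."""
    return today >= next_review(zone, last_review)


# Assumed example: a Zone 3 chatbot last reviewed on 1 January 2025.
chatbot_next = next_review(3, date(2025, 1, 1))
```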

📖 Read the complete framework: The Five Dimensions of AI Quality: A Practical Guide

By using Zuci’s AI classification framework together with the Five Dimensions testing methodology, you can systematically remove testing mismatches and errors. This integrated approach helps you evaluate your AI using the precise metrics and parameters aligned with its specific function.

Which of these sounds familiar?

Does your system give different answers to the same question?

Once you know your zone, you know what to test:

| Your Zone | Test For | Don’t Test For |
| --- | --- | --- |
| Zone 1 | Reproducibility + Factuality | Creative variance |
| Zone 2 | Factuality + Drift | Minor score variations |
| Zone 3 | Bias + Explainability + Drift | Output reproducibility |
| Zone 4 | Bias + Explainability | Creative diversity |
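For teams that want this mapping in a test harness, the table can be transcribed into a plain lookup. The sketch below mirrors the table only; the structure and names (`ZONE_TEST_PLAN`, `should_test`) are hypothetical, and the continuous drift monitoring recommended for all zones is not encoded here.

```python
# Transcription of the zone table; keys are zone numbers.
ZONE_TEST_PLAN = {
    1: {"test_for": ["Reproducibility", "Factuality"],
        "dont_test_for": "Creative variance"},
    2: {"test_for": ["Factuality", "Drift"],
        "dont_test_for": "Minor score variations"},
    3: {"test_for": ["Bias", "Explainability", "Drift"],
        "dont_test_for": "Output reproducibility"},
    4: {"test_for": ["Bias", "Explainability"],
        "dont_test_for": "Creative diversity"},
}


def should_test(zone: int, dimension: str) -> bool:
    """True when the table lists this dimension for the given zone."""
    return dimension in ZONE_TEST_PLAN[zone]["test_for"]


# A Zone 3 chatbot is tested for bias, not for output reproducibility.
chatbot_checks = ZONE_TEST_PLAN[3]["test_for"]
```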

Need expert help?

Book a 30-minute strategy session with our AI quality engineering team to:

- Classify your AI systems accurately

- Identify testing gaps and mismatches

- Build a zone-specific testing roadmap

Zuci Systems is an AI-first digital transformation partner specializing in quality engineering for AI systems.

Named a Major Contender by Everest Group in the PEAK Matrix Assessment for Enterprise QE Services 2025 and Specialist QE Services, we’ve validated AI implementations for Fortune 500 financial institutions and healthcare providers.

Our QE practice establishes reproducibility, factuality, and bias detection frameworks that enable enterprise-scale AI deployment in regulated industries.

At Zuci, we’ve applied the Five Dimensions of AI Quality to help teams move from AI pilots to production with confidence.

Our QE for AI services spans:

Explore our QE for AI services.

