As AI-powered software delivery gains momentum across the industry, enterprises must simultaneously evolve the processes that validate it. Quality engineering in AI is moving from a supporting activity to a core discipline for digital resilience.

The 17th edition of the World Quality Report 2025-26 underscores this shift. As code generation and automation accelerate, organizations must demand validation with equal rigor. The report highlights a significant gap: while 15% of organizations have successfully scaled their AI, 43% remain in the experimental phase.

This gap points to the central challenge for existing QE practices: how to move AI from pilots to production. Let’s take a look at some of the most important quality engineering trends for 2026.

Interestingly, the landscape of quality engineering in AI seems bifurcated so far. On the one hand, there is a clear surge in the adoption of generative AI and related implementations for tactical tasks. On the other hand, there is a cautious “Let’s wait and see” sentiment when it comes to autonomous agents.

Up until now, enterprises framed AI and its adoption largely in terms of “Testing as a Service”. However, the Everest Group Enterprise QE Services PEAK Matrix 2025 highlights a crucial market trend rising in 2026: enterprises now consider engineering-led assurance a high priority for AI adoption.

This shift also shows up in the 17th World Quality Report 2025-26, which notes that “Quality for AI” and “AI for Quality” are now being budgeted as distinct, high-priority workstreams.

The industry is evidently moving towards a “Quality, not Quantity” mantra. Instead of focusing on the volume of automated test cases, leaders are emphasizing outcome-linked indicators such as release predictability, production stability, and business value alignment.

AI is removing old bottlenecks and accelerating workflows, while well-defined QE strategies serve as the final guardrail for high-quality results. Here are the top trends that are set to transform QE, AI, and output quality in 2026:

According to the World Quality Report, while 95% of organizations utilize GenAI for test data, only 10% have been able to embed this technology into their development lifecycles. GenAI remains confined to narrowly scoped, project-based tasks rather than being leveraged to its full potential as a strategic partner.

In 2026, that changes. AI is no longer an add-on to existing QE processes; it becomes the foundation of how quality is designed, executed, and improved.

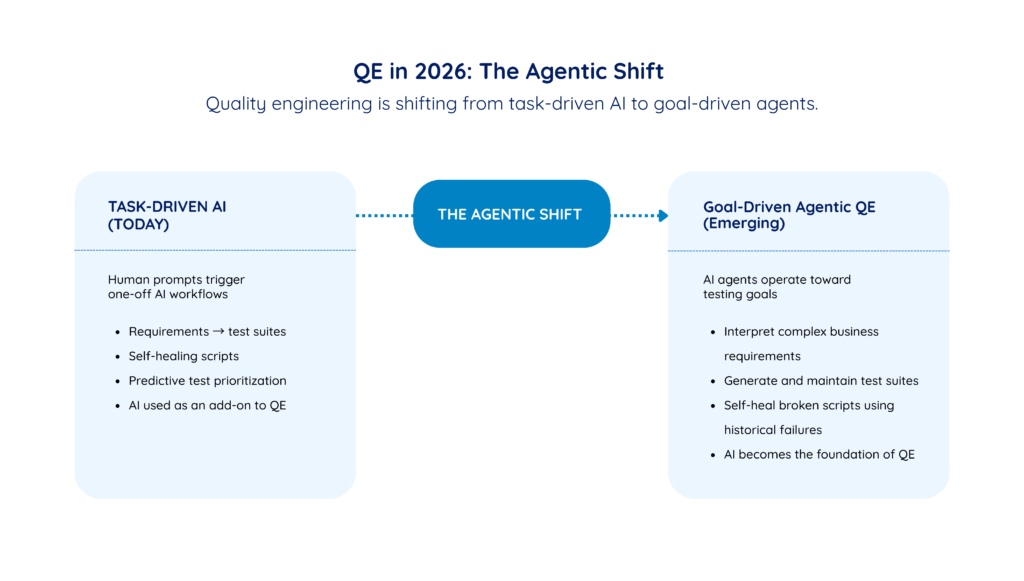

Enterprises are moving from task-driven AI (a human triggers a one-off AI workflow with a prompt) to goal-driven, agentic AI. Intelligent AI agents can now:

This shift significantly reduces manual test maintenance effort and accelerates test cycles.

One of the key components that drives this trend is the use of systems to predict high-impact regressions. In simpler words, agentic AI systems are able to prioritize “what gets tested” by thoroughly analyzing historical code changes and defect patterns.

This ensures that resources are allocated to the areas of highest risk, making the entire QE process efficient. For QE engineers, there is a shift in roles from writing scripts to governing, curating, and optimizing these autonomous workflows.
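The risk-based prioritization described above can be sketched in a few lines of Python. This is a minimal illustration, not a description of any specific agentic platform: the `ModuleHistory` record, the linear `risk_score`, and its weights are all hypothetical, and a real system would likely learn its weighting from richer change and defect data.

```python
from dataclasses import dataclass


@dataclass
class ModuleHistory:
    """Hypothetical per-module history from version control and a defect tracker."""
    name: str
    recent_changes: int  # commits touching the module in the current release window
    past_defects: int    # defects historically traced to the module
    tests: list[str]     # identifiers of the tests that cover the module


def risk_score(m: ModuleHistory, change_weight: float = 1.0,
               defect_weight: float = 2.0) -> float:
    # Illustrative linear score; the weights are assumptions, not calibrated values.
    return change_weight * m.recent_changes + defect_weight * m.past_defects


def prioritize_tests(modules: list[ModuleHistory]) -> list[str]:
    """Order test ids so that tests covering the riskiest modules run first."""
    ordered: list[str] = []
    seen: set[str] = set()
    for m in sorted(modules, key=risk_score, reverse=True):
        for test_id in m.tests:
            if test_id not in seen:  # a test shared by two modules is scheduled once
                seen.add(test_id)
                ordered.append(test_id)
    return ordered


history = [
    ModuleHistory("checkout", recent_changes=12, past_defects=5,
                  tests=["test_cart", "test_payment"]),
    ModuleHistory("profile", recent_changes=2, past_defects=0,
                  tests=["test_profile"]),
    ModuleHistory("search", recent_changes=6, past_defects=1,
                  tests=["test_search", "test_cart"]),
]
plan = prioritize_tests(history)
print(plan)
```

In this toy data set the heavily changed, defect-prone `checkout` module scores highest, so its tests are scheduled ahead of those for `search` and `profile`.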

The trend highlights that the industry is beginning to consider using AI as a “design architect” that shapes inputs such as requirements and test design. AI may soon be able to move beyond the question of “how to report” and help decide “what to test”.

Enterprises stand at the cusp of change now; this is the right time to bridge the pilot-to-production gap by empowering strategy with execution and converting theory into action:

Enterprises that want to benefit from AI-driven QE must treat requirements, test cases, test runs, and production incidents as governed assets. The stronger this foundation, the more effectively AI agents can design tests, self-heal suites, and prioritize coverage where it truly reduces risk.

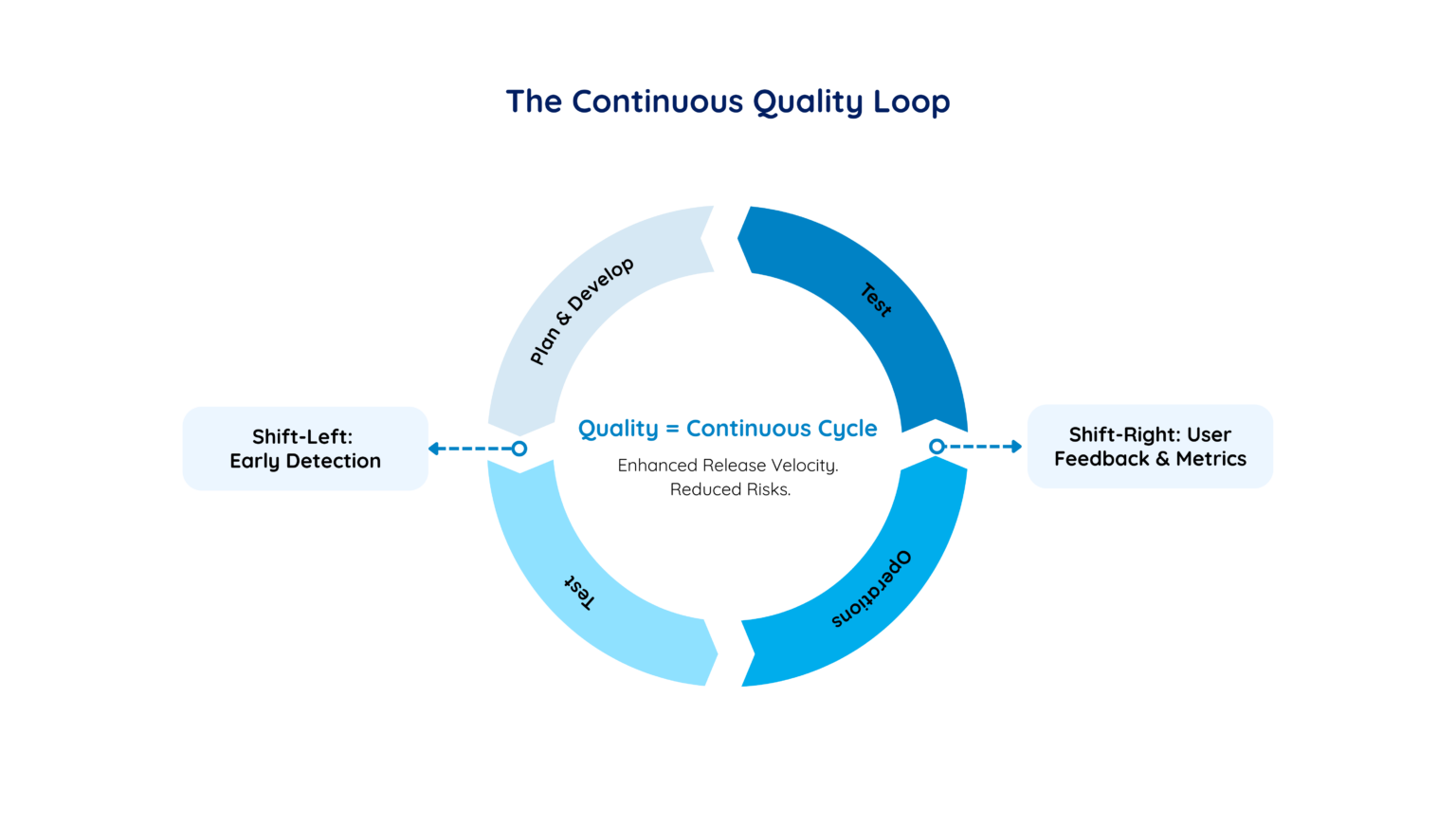

The second major trend is the rapid blurring of boundaries between development, testing, and operations. “Quality” has become a continuous, cyclical practice rather than a milestone in the software lifecycle.

Working in tandem, shift-left and shift-right practices inform the testing strategy of the next release, creating a continuous quality loop. This loop improves release velocity and mitigates the risk of production failures.

Continuous quality requires QE leaders to operate in a “two-speed” model:

Traditional, determinism-oriented testing methods are proving inadequate for gauging the output quality of AI systems. Until recently, QE focused on deterministic systems, where fixed inputs produced guaranteed outputs. However, AI’s probabilistic nature introduces variability that deterministic checks cannot properly judge.

New trends suggest a redefinition of quality, from verifying binary correctness to making less “predictable” systems more trustworthy.

This shift emphasizes that testing is no longer the final gatekeeper for AI quality. Instead, QE now functions as continuous scaffolding integrated into the entire AI lifecycle. Success is measured through probabilistic intervals instead of pass/fail results, and it requires new metrics such as factuality rates, bias indices, and determinism percentages.
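To make the move from pass/fail to probabilistic intervals concrete, here is a minimal Python sketch. It rests on assumptions: the article does not define these metrics, so "factuality rate" is taken here as the share of sampled answers judged correct, reported with a Wilson score interval, and "determinism percentage" as the share of repeated runs that return the most common output. The sample figures are hypothetical.

```python
import math
from collections import Counter


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - half, center + half


def determinism_percentage(outputs: list[str]) -> float:
    """Percentage of repeated runs that returned the most common output."""
    if not outputs:
        raise ValueError("outputs must not be empty")
    most_common_count = Counter(outputs).most_common(1)[0][1]
    return 100.0 * most_common_count / len(outputs)


# Hypothetical evaluation: 88 of 100 sampled answers were judged factually correct.
low, high = wilson_interval(88, 100)
print(f"factuality rate 0.88, approx. 95% interval [{low:.3f}, {high:.3f}]")

# Hypothetical repeated runs of the same prompt.
runs = ["A", "A", "B", "A", "A"]
print(f"determinism: {determinism_percentage(runs):.1f}%")
```

A release gate built on this idea could require the lower bound of the interval, rather than the raw rate, to clear an agreed threshold, so that small evaluation samples do not produce overconfident sign-offs.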

Strategy fragmentation is an issue of structure, not testing.

What does “AI quality” actually mean in measurable terms?

Explore how the Five Dimensions of AI Quality framework helps enterprises evaluate trust, reproducibility, and production reliability across AI systems.

The 5 Dimensions of AI Quality: A Guide to Scaling AI from Pilot to Production →

AI does not behave like traditional software. So how do you test it?

Understand how the Determinism Spectrum reframes validation for probabilistic systems.

Read: The Determinism Spectrum: Why AI Can’t Be Tested Like Just Another Software →


As quality engineering undergoes an organizational transformation, it changes from something enterprises use to find bugs into a discipline that ensures business resilience, protects revenue, and maintains customer trust.

Traditional QE metrics like test coverage and defect counts are no longer enough. Leaders now look at outcomes such as predictability of releases, production stability, user adoption rates, and the reliability of AI‑driven experiences.

Quality is now embedded within cross-functional squads through service providers that maximize business value using effective AI implementation and platformization.

The whole process of incorporating quality at the heart of AI model testing defines the modern QE for AI.


With the evolution of QE for AI, the role of the QA engineer is expanding. As AI takes over repetitive script generation and execution, the traditional “QA Engineer” is becoming an “AI Orchestrator” or “Strategic Business Alignment Partner”.

This shift in roles can be attributed to the move from deterministic testing to probabilistic evaluation of AI systems. Since the “why” and “how” of an AI model’s reasoning matter just as much as the output, the required human skillset is also shifting from manual bug detection to AI/ML model evaluation, API observability, and business risk management.

According to the Everest Group PEAK Matrix, the “Major contenders” in the QE ecosystem are those trailblazers that upskill their talent in AI/ML, API observability, and business risk management successfully and on time. Currently, only 53% of testers possess AI and ML skills, which suggests that demand for skilled testers exceeds supply.

Enterprises should move past the “AI hype” and replace ad hoc automation with validated outcomes, a skilled workforce, and a roadmap for future growth.

The future QE professional is not replaced by AI; they are the human layer that ensures AI-powered systems behave safely, reliably, and in line with business objectives.

Organizations aiming to “thrive, not just survive” in this emerging QE in AI ecosystem must look beyond tools and think about governance and resilience:

One of the key trends in quality engineering in AI is the evolution of test data and continuous QA loops. Establishing quality for probabilistic AI systems means shifting from verifying correctness to quantifying confidence.

Read Next: QE for AI: Determinism-Based Quality Assurance for AI Systems

Determining AI quality goes beyond testing: it relies on understanding the principles that govern AI behavior and assessing the system holistically. Learn how you can evaluate your AI systems for quality outputs with Zuci’s Five Dimensions of AI Quality, which help you scale your AI from pilot to production.

Read Next: AI Quality Checklist: A Five Dimensions Approach to AI Accuracy

If you are ready to begin with AI, book a consultation with Zuci Systems to harness the true power of AI for your enterprise’s specific needs.


If your organization is navigating the shift to AI-driven software delivery, Zuci Systems can help.

Zuci Systems is an AI-first digital transformation partner specializing in enterprise-grade AI agent design and multi-agent orchestration. We help Fortune 500 companies in banking, insurance, and healthcare design and deploy AI systems that are predictable, explainable, and production-ready.

Our approach combines structural discipline (7 design principles), intelligence design (PRIMAL Core framework), and enterprise controls (Trust Layer) to create agents that work reliably in regulated, high-stakes environments.

Contact: connect@zucisystems.com | www.zucisystems.com
