
How to Streamline Data Labeling for Machine Learning: Tools and Practical Approaches

I write about fintech, data, and everything around it

This concise guide helps you take the pain out of data labeling. It introduces the tools and practical approaches you need to know to streamline your process.

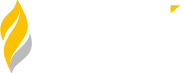

Artificial intelligence and machine learning are now used in almost every industry. 48% of businesses already use machine learning and data analysis in some capacity, and 65% plan to adopt them to improve decision-making. Machine learning offers numerous benefits, chief among them enabling machines to learn from past data and make decisions. It does so by analyzing, extracting, and interpreting large volumes of data, which is why data labeling plays a crucial role in machine learning.

Data labeling is a central part of the data preprocessing workflow for machine learning. It structures data to make it useful and meaningful. The labeled data is then used to train machine learning systems to find ‘meaning’ in new, relevant data.

To help you understand it better, we have put together this guide. It covers the importance of data labeling for machine learning and the tools and approaches you need to know.

What is Machine Learning Data Labeling?

Data labeling for machine learning is the process of adding target attributes to training data. In other words, it is the process of adding labels to raw data such as text, images, video, and audio so that a machine learning model understands what predictions are expected of it.

When data is “labeled” in ML, it means that the target—the prediction you want your machine learning model to make—has been highlighted or annotated in the data. Data labeling is a broad term that refers to a variety of tasks including annotation, classification, data tagging, moderation, processing, and transcription.
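To make the idea of a “labeled” record concrete, here is a minimal Python sketch. The `Example` class, the transaction descriptions, and the label names are all hypothetical and exist only for illustration; real projects will use whatever schema their tooling defines.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Example:
    """One training record: raw input plus an optional target label."""
    text: str
    label: Optional[str] = None  # None means the record is still unlabeled


def is_labeled(example: Example) -> bool:
    """A record counts as labeled once its target has been annotated."""
    return example.label is not None


# Hypothetical bank-transaction descriptions; the labels are made up.
records = [
    Example("Wire transfer to new overseas account", label="review"),
    Example("Monthly salary deposit", label="normal"),
    Example("Card payment at grocery store"),  # not yet annotated
]

labeled = [r for r in records if is_labeled(r)]
unlabeled = [r for r in records if not is_labeled(r)]
print(len(labeled), "labeled,", len(unlabeled), "unlabeled")
```

Only the records in `labeled` can be used directly for supervised training; the rest still need a label from one of the approaches discussed in this guide.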

In the context of banking and financial institutions, for instance, data labeling helps generate actionable insights from the enormous databases that banks collect. It also helps them identify relevant information and assess the risk associated with dealing with a particular entity.

Why is Data Labeling Important?

ML and deep learning require data labeling in order to sort through data and build an appropriate training model. The quality of an AI system depends on its algorithm and on its training data, so the caliber and volume of the data supplied form the basis of an effective AI system. Good labels help an AI model learn and accomplish its goals effectively. Data labeling is also important because it helps AI and ML algorithms accurately understand real-world environments and situations.

Data Labeling Approaches For Machine Learning

Data labeling for machine learning is a tough endeavor, but it is one of the most important stages of supervised learning. A human must map the target attributes in historical data before an ML algorithm can learn to find them. Data labelers must therefore be meticulous, because even a tiny inaccuracy can degrade the quality of the dataset, which in turn affects how well the ML model performs overall.

There are numerous approaches to data labeling. Which one fits depends on the complexity of the problem and the training data, the size of the data science team, and the time and budget a company can dedicate to the project.

Here are some of the best approaches data labelers can use to annotate data for their predictive models:

In-house labeling

If your organization has enough resources, staff, and time, in-house labeling is the best solution. In-house labeling is usually done by the company’s own data scientists and data engineers, which generally yields the highest labeling quality. For sectors like insurance or healthcare, high-quality labeling is essential, and it frequently requires consultation with specialists in related fields.

Automating data labeling with semi-supervised learning boosts productivity. In this training technique, both labeled and unlabeled data are used. Projects in industries such as finance, space, healthcare, and energy typically need expert data assessment, so teams consult subject matter experts about the fundamentals of labeling. Sometimes, datasets can only be labeled by the organization’s expert data scientists or data engineers.
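As an illustration of how labeled and unlabeled data can be combined, the sketch below uses self-training (pseudo-labeling), which is only one of several semi-supervised techniques and is our choice for this example. It assumes 2-D points, a simple nearest-centroid classifier, and at least two classes in the seed set; the function names and the `margin` threshold are hypothetical.

```python
from math import dist
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def centroids(labeled: List[Tuple[Point, str]]) -> Dict[str, Point]:
    """Compute the mean point of each class from the labeled examples."""
    sums: Dict[str, List[float]] = {}
    for (x, y), label in labeled:
        s = sums.setdefault(label, [0.0, 0.0, 0.0])
        s[0] += x
        s[1] += y
        s[2] += 1
    return {lab: (s[0] / s[2], s[1] / s[2]) for lab, s in sums.items()}


def self_train(labeled, unlabeled, margin=1.0, max_rounds=10):
    """Pseudo-label unlabeled points that are clearly closer to one centroid."""
    labeled = list(labeled)
    remaining = list(unlabeled)
    for _ in range(max_rounds):
        cents = centroids(labeled)
        still_unsure = []
        for p in remaining:
            # Rank classes by distance from the point to each class centroid.
            ranked = sorted(cents, key=lambda lab: dist(p, cents[lab]))
            best, second = ranked[0], ranked[1]
            confident = dist(p, cents[second]) - dist(p, cents[best]) >= margin
            if confident:
                labeled.append((p, best))
            else:
                still_unsure.append(p)
        if len(still_unsure) == len(remaining):
            break  # no new pseudo-labels were added this round
        remaining = still_unsure
    return labeled, remaining


# Two expert-labeled seed points and three unlabeled points.
seed = [((0.0, 0.0), "a"), ((10.0, 10.0), "b")]
pool = [(1.0, 1.0), (9.0, 9.0), (5.0, 5.0)]
new_labeled, unsure = self_train(seed, pool)
print(len(new_labeled), "labeled;", unsure, "left for a human")
```

Points the model is confident about receive a label automatically, while ambiguous points, such as the one exactly between the two classes here, stay in the queue for expert review.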

Advantages:

With in-house labeling, also referred to as internal labeling, you have complete control over the process and can deliver dependable results. When labeling data, sticking to the schedule is essential, and being able to check the team’s progress at any moment to make sure they are on track is invaluable.

Disadvantages:

A significant drawback of in-house labeling is how slowly it moves. It is true that excellent things take time, and this situation is a prime example. For high-quality datasets, your team will require time to carefully classify the data. Of course, this only applies if your project is too large for your team to complete quickly.

Crowdsourcing

Crowdsourcing is the practice of collecting annotated data with the help of a large number of independent contractors registered on a crowdsourcing platform. It eliminates the need to hire new staff. Platforms with tens of thousands of registered data annotators are frequently used to crowdsource the annotation of a basic dataset.

Advantages:

Crowdsourcing is useful for teams that have large tasks to complete in very limited time. It delivers results fast and saves time and money, since the platforms come equipped with strong data tagging tools.

Disadvantages:

Crowdsourcing can deliver labeled data of inconsistent quality. On a platform where workers’ pay depends on the number of tasks they complete each day, workers are prone to disregarding task guidelines in order to finish as many jobs as possible.
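One common way to reduce the effect of inconsistent crowd labels, which this article does not prescribe, is to collect several votes per item and keep only labels that enough workers agree on. The sketch below assumes that setup; the `aggregate` function, the 60% agreement threshold, and the sample votes are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple


def aggregate(votes: Dict[str, List[str]], min_agreement: float = 0.6
              ) -> Tuple[Dict[str, str], List[str]]:
    """Majority-vote each item; flag items whose top label lacks support."""
    accepted: Dict[str, str] = {}
    needs_review: List[str] = []
    for item_id, labels in votes.items():
        if not labels:
            needs_review.append(item_id)  # nobody labeled this item
            continue
        top_label, top_count = Counter(labels).most_common(1)[0]
        if top_count / len(labels) >= min_agreement:
            accepted[item_id] = top_label
        else:
            needs_review.append(item_id)
    return accepted, needs_review


# Three hypothetical workers labeled each image.
votes = {
    "img_001": ["cat", "cat", "cat"],
    "img_002": ["dog", "cat", "dog"],
    "img_003": ["cat", "dog", "bird"],
}
accepted, needs_review = aggregate(votes)
print(accepted)
print(needs_review)
```

Items that land in `needs_review` can be sent back to the crowd for more votes or escalated to an in-house expert.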

Outsourcing to Individuals

Outsourcing is a middle ground between in-house data labeling and crowdsourcing in which the job of data annotation is delegated to a company or a person. One benefit of outsourcing to individuals is that you can evaluate a person’s knowledge of a given subject before handing over the work. For projects that have a limited budget yet need high-quality data annotation, this way of building annotated datasets is ideal.

Advantages:

With this approach, you get the ability to speak with the freelancers and discover more about their areas of specialization, giving you the knowledge you need to make an informed hiring decision.

Disadvantages:

For freelancers to fully comprehend the jobs they are assigned, you may need to design your task interface or template and take the time to offer detailed and precise instructions.

Outsourcing to Companies

Rather than using temporary workers or a crowd, you can turn to outsourcing organizations that specialize in training data preparation. Outsourcing businesses that focus on data labeling for machine learning are easy to find. They offer high-quality training data because they are well equipped and employ highly qualified personnel.

These companies position themselves as alternatives to crowdsourcing platforms and emphasize that their qualified workforce will deliver high-quality training data. The customer’s own team can then concentrate on more difficult tasks. Working with an outsourcing firm is therefore like having an external crew for a while.

Advantages:

Outsourcing companies and organizations guarantee their employees can produce high-quality solutions.

Disadvantages:

As useful as this approach is, it can get expensive. Most companies do not provide a breakdown of the cost per project, so the final bill can turn out to be higher than expected.

Synthetic Labeling

This method involves producing data that closely resembles real data in terms of key parameters chosen by the user. In synthetic data labeling, synthetic data is produced by a generative model trained and validated on an initial dataset. Synthetic labeling can be used when developing ML models for applications that require object recognition. Difficult tasks of this kind need extensive training datasets and skilled labelers, so generating a labeled dataset is often the better choice when a large volume of data is needed with a quick turnaround.

There are three types of generative models that synthetic labeling utilizes. They are as follows:

- Generative Adversarial Networks: GAN models pit a generative network against a discriminative network in a zero-sum game. The generative network produces data samples, while the discriminative network (trained on real data) tries to determine whether each sample is genuine (drawn from the true data distribution) or generated (drawn from the model distribution). The game continues until the generative model has received enough feedback to produce images that are indistinguishable from genuine ones.

- Autoregressive Models: AR models produce each new value as a linear combination of the variable’s prior values. When generating images, AR models build each pixel individually based on the pixels above and to the left of it.

- Variational Autoencoders: By encoding and decoding input, Variational Autoencoders (VAE) generate fresh data samples. A Variational Autoencoder offers a probabilistic way to describe an observation in latent space. As a result, rather than producing a single value to represent each latent attribute, the encoder describes a probability distribution for each latent attribute.
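Of the three model types above, the autoregressive idea is the easiest to show in a few lines. The sketch below generates a one-dimensional series in which each new value is a linear combination of the previous values, plus optional Gaussian noise. The function name, coefficients, and seed values are hypothetical, and real image models are far more elaborate than this.

```python
import random
from typing import List, Sequence


def generate_ar(coeffs: Sequence[float], seed_values: Sequence[float],
                n: int, noise_std: float = 0.0, rng_seed: int = 0) -> List[float]:
    """Generate n values, each a linear combination of the previous len(coeffs) values."""
    if len(seed_values) < len(coeffs):
        raise ValueError("need at least as many seed values as coefficients")
    rng = random.Random(rng_seed)
    series = list(seed_values)
    for _ in range(n):
        # coeffs[0] weights the most recent value, coeffs[1] the one before, etc.
        recent = series[-1:-len(coeffs) - 1:-1]
        value = sum(c * x for c, x in zip(coeffs, recent))
        series.append(value + rng.gauss(0.0, noise_std))
    return series[len(seed_values):]


# Noise-free AR(2) example: x_t = 0.5 * x_(t-1) + 0.25 * x_(t-2)
print(generate_ar([0.5, 0.25], [1.0, 2.0], n=3))  # [1.25, 1.125, 0.875]
```

Setting `noise_std` above zero makes each run produce a different but statistically similar series, which is the property that makes such models useful for generating synthetic data.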

Advantages:

Synthetic labeling saves time and money, since data can be easily created, adjusted, and updated for specific tasks or to improve the model. Additionally, because synthetic data is non-sensitive, data labelers can use it without needing to seek authorization.

Disadvantages:

First, this approach requires high-performance computing: rendering and the subsequent model training both need substantial computing power. Second, synthetic data is not guaranteed to resemble historical data. As a result, ML models developed using this method need to be trained further on real data.

Data Programming

Data programming eliminates manual labeling. Instead, the data is labeled by labeling functions. A dataset created with the data programming methodology can be used to train generative models.

Data programming entails writing labeling functions, that is, scripts that label data programmatically. Users accept that the generated labels may not be as precise as those produced by manual labeling. However, a noisy dataset produced this way can still be used for weak supervision of high-quality final models.
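The sketch below shows what labeling functions might look like for a hypothetical customer-message classifier. Each function either votes for a label or abstains, and the votes are combined by simple majority. Note the simplification: data programming systems typically combine the functions’ outputs with a learned model rather than a plain vote, and every name, keyword, and label here is an assumption made for illustration.

```python
from collections import Counter
from typing import Callable, List, Optional

ABSTAIN = None  # a labeling function returns this when it has no opinion
LabelingFunction = Callable[[str], Optional[str]]


def lf_refund(text: str) -> Optional[str]:
    return "complaint" if "refund" in text.lower() else ABSTAIN


def lf_unacceptable(text: str) -> Optional[str]:
    return "complaint" if "unacceptable" in text.lower() else ABSTAIN


def lf_thanks(text: str) -> Optional[str]:
    return "praise" if "thank" in text.lower() else ABSTAIN


def weak_label(text: str, lfs: List[LabelingFunction]) -> Optional[str]:
    """Combine labeling-function votes by majority; abstain on ties or no votes."""
    votes = [v for v in (lf(text) for lf in lfs) if v is not ABSTAIN]
    if not votes:
        return ABSTAIN
    (top, top_n), *rest = Counter(votes).most_common(2)
    if rest and rest[0][1] == top_n:
        return ABSTAIN  # conflicting functions with equal support
    return top


LFS = [lf_refund, lf_unacceptable, lf_thanks]
for message in [
    "I want a refund, this is unacceptable",
    "Thanks for the quick help",
    "Where is my order?",
]:
    print(message, "->", weak_label(message, LFS))
```

Messages where the functions abstain or disagree get no label, which is one reason the resulting dataset is noisier and less complete than a manually labeled one.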

Advantages:

A data analysis engine can label the data automatically, without any manual effort.

Disadvantages:

This approach tends to produce less accurate labels, which compromises the quality of the dataset and the overall effectiveness of the ML model.

Pros and Cons of Data Labeling Approaches

Data labeling is one of the most important steps in the data science process. It’s also one of the most tedious and time-consuming. Here are the pros and cons of data labeling approaches:

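| Approach | Description | Pros | Cons |
| --- | --- | --- | --- |
| In-house/Internal Labeling | An expert within the internal data science team labels the data. | Complete control over the process; dependable results; progress is easy to monitor | Slow, especially for large projects |
| Synthetic Labeling | Labeled data is generated from real data so that it follows real-world patterns and standards. | Saves time and money; data is easy to create, adjust, and update; no sensitive data involved | Requires high-performance computing; similarity to real data is not guaranteed |
| Crowdsourcing | Use a crowdsourcing platform with an on-demand workforce instead of a data labeling company. | Fast results; saves time and money | Inconsistent label quality |
| Outsourcing to Individuals | Outsource work to skilled and experienced freelancers. | Expertise can be evaluated before hiring | Requires task templates and detailed instructions |
| Outsourcing to Companies | Outsource labeling to readily available outsourcing companies that specialize in data labeling for machine learning. | High-quality training data from qualified staff | Can be expensive; cost breakdowns are often missing |
| Data Programming | Label data with scripts and programs to avoid manual work. | Automatic labeling without manual effort | Less accurate labels |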

Data Labeling Tools

Generating high-quality labeled data requires time, effort, and investment. If you’re creating a machine learning model, you will want data labeling tools to assemble datasets efficiently and ensure high-quality data. Good data labeling tools are easy to use, need little human intervention, and increase productivity while maintaining a high level of quality.

There are several pre-built labeling solutions for desktop and browser use. You may select the one that’s perfect for you and forgo expensive and time-consuming software development if the functionality they offer meets your expectations.

Image Labeling Tools

The process of recognizing and naming particular elements inside a picture is known as image labeling. Some of the best tools for image and video labeling are as follows:

Image Labeling Tool 1 – Annotorious

Annotorious is a JavaScript image annotation library that lets you attach comments, notes, and tags to a particular area of an image. The MIT-licensed tool lets you add comments and drawings to images on a web page with just two lines of additional code. Users can also explore the tool’s other functionality and carry out various annotation activities.

Annotorious is flexible, extensible, and interoperable. For seamless web annotation, the tool is based on the W3C standards. It allows you to build your own plugins and editor extensions and write formatters to apply rule-based annotation styles. It integrates anywhere and works in the browser with no server-side dependencies. With a rich and abundant JavaScript API, you can also create custom annotation apps. It is free to use.

Image Labeling Tool 2 – LabelMe

LabelMe was created at MIT as an open-source project, and it is one of the best-known image labeling tools. Polygon annotation is its strongest feature. The functionality of the tool is represented by the Labels and Detectors galleries. The former is used for collecting, tagging, and storing pictures. The latter enables the training of real-time object detectors.

LabelMe’s creators also wanted to cater to mobile users and developed a corresponding app, which is available on the App Store.

Image Labeling Tool 3 – Sloth

Sloth is a free and versatile tool that helps annotate video and image files for use in computer vision research. A frequent use case for Sloth is face recognition. You can use Sloth to design software that can follow and precisely identify a person from surveillance cameras or determine whether they have already been featured in records.

Image Labeling Tool 4 – VoTT

Visual Object Tagging Tool (VoTT) is yet another powerful image annotation tool. VoTT is developed by Microsoft and has an interactive, user-friendly design that makes it easier to learn the tool’s many operations and features. Users can build a project without delving into the details of the documentation. VoTT uses deep learning methods to recognize objects quickly and accurately, and it is built with React. VoTT is available as both an Electron app and a web app.

Image Labeling Tool 5 – LabelIMG

LabelIMG is a free, open-source image labeling application that is extremely simple to install on Windows because prebuilt binaries are available. Because it works offline, labeling photographs and retrieving stored images is easier and faster. Other than that, it is a fairly basic tool without any sophisticated features. Furthermore, it only supports bounding boxes; no other labeling techniques are available.

Other than these 5 tools, you can also explore RectLabel, ImageTagger, SentiSight, VGG Image Annotator, Supervise.ly, BeaverDam, LabelBox, ImgLab, and ViPER-GT for image and video labeling.

Text Labeling

In machine learning, text labeling is the process of taking text data and adding one or more meaningful and informative labels so that the machine learning model can learn from them. Some of the best text labeling tools are:

Text Labeling Tool 1 – Tagtog

Tagtog, a text labeling tool with Polish roots, is widely used to label data manually or automatically. In addition to the Tagtog technology, the business also has a network of knowledgeable experts from various industries who can annotate specialized texts. Tagtog gives you the choice of annotating text manually, hiring professionals to label your data, or using automatic machine learning models.

Text Labeling Tool 2 – LightTag

LightTag is an ideal tool for labeling text. It is purpose-built as a text annotation application. It lets users control the quality of the data and check that annotators are performing at their very best.

Text Labeling Tool 3 – Bella

Bella is another free tool that works extremely well. It is intended to speed up and streamline text data labeling. Normally, before training a model, experts must transform a dataset that was labeled in a CSV file or Google spreadsheets into the proper format. Bella is a wonderful alternative to spreadsheets and CSV files because of its capabilities and user-friendly interface. The key components of Bella are a graphical user interface (GUI) and a database backend for labeled data management.

Text Labeling Tool 4 – Dataturks

Dataturks is yet another tool that is widely used for training data preparation. Data teams can use it for tasks including text categorization, moderation, part-of-speech tagging, named-entity recognition tagging, and summarization.

Text Labeling Tool 5 – Stanford CoreNLP

CoreNLP is an excellent tool for labeling text data. With CoreNLP, users can generate linguistic annotations for text, such as token and sentence boundaries, numerical and temporal values, parts of speech, quote attributions, named entities, dependency and constituency parses, sentiment, coreference, and relations. CoreNLP currently supports eight languages: Arabic, Chinese, English, French, German, Hungarian, Italian, and Spanish.

Audio Labeling

Audio files contain spoken words and sentences that are meant for human listeners. Audio labeling makes that speech recognizable to machines. Some of the best audio labeling tools are:

Audio Labeling Tool 1 – SuperAnnotate

SuperAnnotate is a data annotation platform for audio data. It promises to speed up annotation activities by at least three times. Its sophisticated capabilities, such as automated predictions, transfer learning, and data and quality management, make it one of the best audio data labeling tools.

Audio Labeling Tool 2 – Praat

Praat is a well-known and widely used free tool for annotating audio data. It lets you mark the times of events that occur in the audio stream and annotate them with text labels in a compact TextGrid file. Because the text annotations are linked to the audio file, the tool lets you work with sound and text files simultaneously.

Audio Labeling Tool 3 – Speechalyzer

Speechalyzer is a tool whose name speaks for itself. It can be used to manually process large voice datasets. As an illustration of the software’s speed, its developers point out that they tagged thousands of audio recordings very quickly.

Final Takeaways

Data scientists recognize how much high-quality data matters, which is why they put so much effort into the data behind their sophisticated ML models. Many data labeling tools are available, which makes choosing the best one a challenge. Teams working on data science projects need to determine which tool, in terms of overall cost and capability, is most appropriate for a given project.

Data labelers have discovered fresh approaches for partially automating the labeling procedure and for replacing or enhancing human labeling methods. Even so, the development of more effective automated data labeling procedures that need fewer people while still producing high-quality training datasets for ML models will be crucial in the future.

We hope you like this article and learn how data labeling is an intrinsic part of data science! Book a discovery call for our data engineering services today and get ahead of the competition. Make it simple & make it fast.
